My Services

Specialized Expertise in Demographic Analysis

I provide precise, reliable interpretations of complex demographic data, drawing on advanced analytical skills that include predictive modeling and customized research.

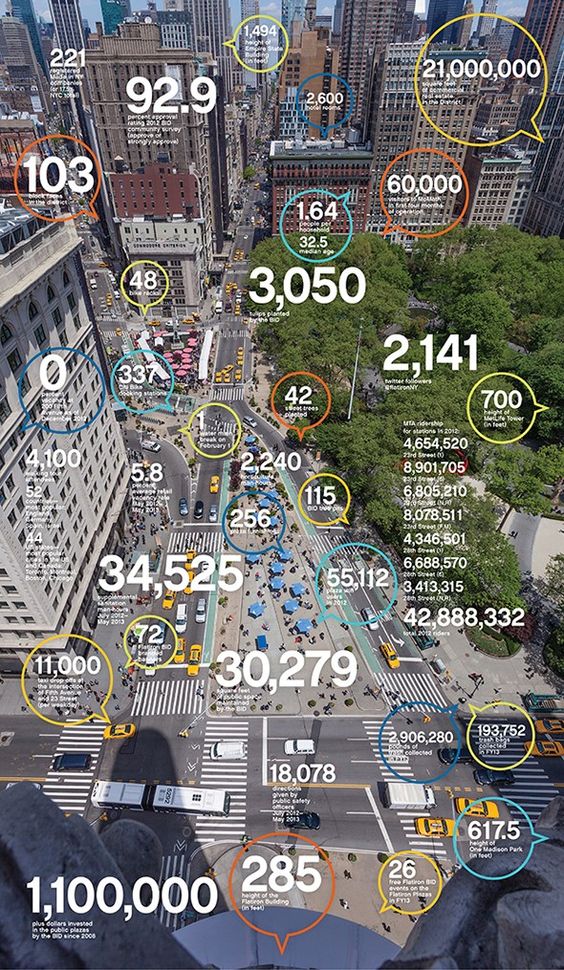

Data Visualization Expertise

I specialize in creating detailed and compelling data visualizations that transform complex information into clear, easily understandable insights.

Ready-to-Learn Tutorials

Dive into my extensive library of tutorials, where you can download comprehensive guides designed to help you master the R programming language, Stata, and Tableau visualization.

My Skills

If you’re not sure what I do or how I can help you, here’s a quick rundown of my skills. I am highly skilled in a range of data analysis and visualization tools, which allows me to provide comprehensive solutions to your needs.

Demography is the foundation of all policy. It’s the study of how populations change, and those changes influence every aspect of our lives.

ABOUT ME

I am an expert in data analysis, the visual display of information, and the development of reports for a wide range of audiences.

The hallmark of my expertise is my ability to weave together a diverse array of relevant information, including demographic, social, and economic data. This holistic perspective allows me to deliver analyses that are both thorough and insightful, helping clients navigate complex demographic landscapes with confidence.